Toxic Comment Classification

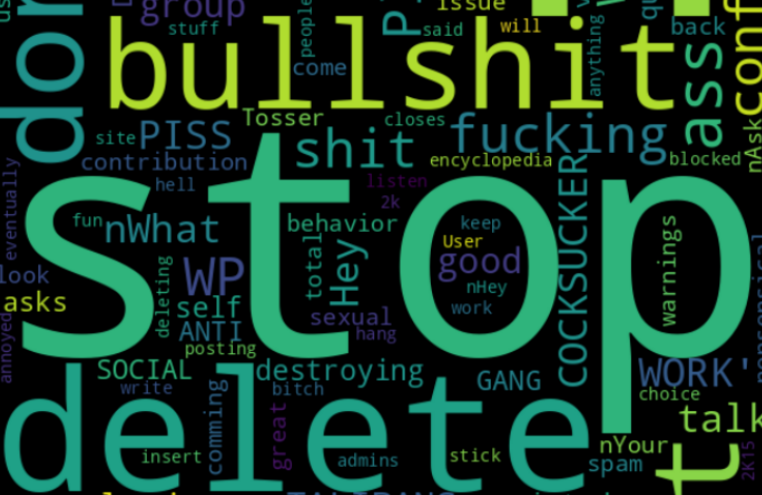

One area of research is the study of negative online behaviors, such as toxic comments (i.e., comments that are rude, disrespectful, or otherwise likely to make someone leave a discussion).

The challenge of this project is to use a dataset of comments from Wikipedia’s talk page edits to build a model capable of detecting different types of toxicity, such as threats, obscenity, insults, and identity-based hate.
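Because one comment can carry several types of toxicity at once (for example, both a threat and an insult), the task is naturally multi-label rather than multi-class. The sketch below illustrates one common framing, binary relevance, in which one independent yes/no classifier is trained per toxicity type. It is a minimal, dependency-free illustration under stated assumptions, not this project's model: the label names `threat` and `insult`, the toy comments, and the choice of a hand-written multinomial naive Bayes scorer are all hypothetical stand-ins chosen for brevity.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into word tokens (letters and apostrophes)."""
    return re.findall(r"[a-z']+", text.lower())


class BinaryNaiveBayes:
    """Multinomial naive Bayes for one yes/no label, with add-one (Laplace) smoothing."""

    def fit(self, docs, ys):
        self.counts = {True: Counter(), False: Counter()}  # token counts per class
        self.doc_counts = Counter(ys)                      # number of documents per class
        for tokens, y in zip(docs, ys):
            self.counts[y].update(tokens)
        self.vocab = set(self.counts[True]) | set(self.counts[False])
        self.totals = {c: sum(self.counts[c].values()) for c in (True, False)}
        self.n_docs = len(ys)
        return self

    def log_score(self, tokens, c):
        # Smoothed log P(c) plus the sum of log P(token | c);
        # tokens never seen during training are skipped.
        score = math.log((self.doc_counts[c] + 1) / (self.n_docs + 2))
        denom = self.totals[c] + len(self.vocab)
        for t in tokens:
            if t in self.vocab:
                score += math.log((self.counts[c][t] + 1) / denom)
        return score

    def predict(self, tokens):
        return self.log_score(tokens, True) > self.log_score(tokens, False)


class MultiLabelClassifier:
    """Binary relevance: one independent BinaryNaiveBayes per label."""

    def __init__(self, labels):
        self.labels = labels  # hypothetical label names, e.g. ["threat", "insult"]

    def fit(self, texts, label_sets):
        docs = [tokenize(t) for t in texts]
        self.models = {
            label: BinaryNaiveBayes().fit(docs, [label in s for s in label_sets])
            for label in self.labels
        }
        return self

    def predict(self, text):
        tokens = tokenize(text)
        return {label for label, model in self.models.items() if model.predict(tokens)}


# Toy comments invented for illustration only; they are NOT from the Wikipedia dataset.
train_texts = [
    "i will hurt you",
    "i will find you",
    "you are an idiot",
    "what a stupid idiot",
    "thanks for fixing the citation",
    "great edit thanks",
    "stupid idiot i will hurt you",
]
train_labels = [
    {"threat"},
    {"threat"},
    {"insult"},
    {"insult"},
    set(),
    set(),
    {"threat", "insult"},
]

clf = MultiLabelClassifier(["threat", "insult"]).fit(train_texts, train_labels)
print(clf.predict("i will hurt you"))
print(clf.predict("thanks for the edit"))
```

Binary relevance treats each label independently, so it cannot exploit correlations between labels. The same one-output-per-label structure still applies if the naive Bayes scorer is swapped for a stronger per-label model, such as logistic regression on TF-IDF features or a neural network with one sigmoid output per toxicity type.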